In the chart below I show job growth per 1000 residents from July 2010 to June 2011.

The best performance is coming from poor, heavily rural states. Remember how I said that rural areas shed jobs from 2002 to 2009? That seems to have been completely turned on its head. The only way I can make sense of the latest numbers is that rural areas seem to be adding a lot of jobs. This is obviously due to higher commodity prices, especially higher farm prices.

A Rural Boom?

Each economic upswing seems to have a theme. In the 90s it was the internet, and in the 00s it was housing. I think that one of the themes in the next upswing is going to be a boom in rural economies. Perhaps the next bubble will be in the price of farmland.

Job creation in rural areas also explains why many of the jobs created are low wage, as this New York Times story reports. Those are the kind of jobs that rural areas tend to have.

Rural economies have been through many years of hard times and it will take many good years before these areas start to look prosperous. Republican voters are more likely to be rural than Democrats. If people see hiring in their local economies, they are more likely to oppose government spending on stimulus. That may explain some of our political divide.

Wednesday, August 3, 2011

Monday, August 1, 2011

California versus Texas: Part 7: Conclusions

So what is Texas doing right? The first thing to say is that, like California, it is a heavily urbanized state. Over the past decade, rural areas everywhere shed jobs. Higher food prices may be changing that picture, and I will address that in a future post.

The next thing to point out is that Texas receives a big boost from being a right-to-work state. Educational levels and wages are average, but low housing prices keep standards of living high.

I think that California's big mistake is letting housing prices get too high. This drives people out of the state and drives up labor costs. For a while a house worked as a third source of income for Californian families, but this is no longer happening. Despite better wages, Californians aren't doing any better than Texans once housing costs are paid for. High labor costs drive employers away.

California's costs will never be as low as Texas, but Texas really isn't our natural competitor. We need to compete with the East Coast. If California could lower our housing costs and raise the number of college graduates in our workforce, then sunshine and scenery would do the rest.

Exploding the Rick Perry myth

We are told that Texas Governor Perry has a great jobs story to tell. Texas has done well, but I don't think Perry had much to do with it. The Texas economy was doing great before Rick Perry showed up as governor. Below I have charted the job creating performance of several states during the 1990s. I use March 1991 to January 2002 to try to match the lows of the economic cycle. Right-to-work states are in red.

Once again Texas is a top performer. The best way to have a great jobs story is to inherit one.

California did much better in the 90s than it did in the 00s. The state's economic performance has now deteriorated to New York levels.

The Washington state anomaly

One reason I worked out some numbers for the 90s is that I wanted to see if Washington state did as well in the 90s as it did in the 00s. While not matching the performance of job creation superstars like Utah and Arizona, Washington state finished just behind Texas. That is a very good performance for a moderately high wage, union friendly state. I don't know if this is just the influence of Boeing and Microsoft, or if something else is going on.

Things that don't seem to matter much

I thought that the amount of federal spending per dollar collected in taxes would have a strong economic impact. It seemed reasonable that places that have a net inflow of money from the government would have more jobs. I looked for this effect and didn't find it. Great economies are not built on government pork.

I wondered if the size of state government would have an impact. Nevada and Florida have an exceptionally low state tax burden. Their economic performance is good but not exceptional. I don't think that the level of state taxes matters as much as Republicans think. Education, on the other hand, is very definitely important.

I also looked to see if having very large cities or having hub airports mattered. I didn't see any evidence of that, although I will have to do a Metropolitan Statistical Area based analysis before I can say that these things don't matter at all.

In my next post I will write about where jobs are being created in 2011.

(1991 Population data from: http://www.census.gov/popest/archives/1990s/ST-99-03.txt)

Sunday, July 31, 2011

California versus Texas: Part 6: The model

The first thing to say is that my model isn't wildly precise. However, where it predicts lots of job growth there is job growth, and where it predicts lots of job losses there are job losses. It works well enough to reassure me that I am looking at the right things.

The real national economy is very complex and very diverse, so a simple formula is never going to give precise answers. However, to have any success at all, that simple formula has to get some big things right.

There are a few states where it fails badly, but even so it fails in an interesting way. Kansas and Nebraska are neighbors, and the model greatly over predicts job growth in both states. There seems to be some regional factor at work. West Virginia and Kentucky do much, much better than predicted. Both are coal mining states with a lot of labor intensive underground mining, and coal prices have risen dramatically in the last ten years. And then there is Washington state, which does much better than expected. Washington state is an anomaly in many ways, and I don't know why. It might be due to the presence of Boeing and Microsoft. The state has very good pay after taxes and housing costs are taken out. While the congressional delegation looks like California, the state tax system looks more like Texas. Washington state is either lucky or they are doing something right which my model doesn't capture.

How it works

The idea behind my model is that job growth will happen if employees are profitable. If an employee produces $50,000 per year of output, but only costs $40,000 per year to the company, then a company will tend to expand and add jobs. The rate of job growth is proportional to profitability. So a state that has a well educated workforce working for moderate wages will see job growth.

I calculate a labor force value which increases with the percentage of the workforce which is college educated. If the state is a right-to-work state, that increases the labor force value by another 17%.

Labor force value = 22845 + (775 * Percentage college educated)

I then subtract the mean wage to get profit, and multiply that by a factor to get employment growth.

Job growth = (Labor force value - mean wage) * 6/1000

I then subtract the job losses due to rural populations, and add the regional adjustment, if any. The final formula is:

Projected job growth = ((Labor force value - mean wage) * 6/1000) - Percentage of population which is rural + Regional correction

Examples

California has an average wage of $50730, 6% of the population is rural, and 30% of the workforce has a bachelor's degree. It is not a right to work state. The regional correction is +30.

Labor force value $ = 22845 + (775 * 30) = 46095

Projected job growth = (46095 - 50730) * 6/1000 - 6 + 30 = -3.8
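For anyone who wants to play with the numbers, here is a minimal Python sketch of the model as described in this post. The function and parameter names are my own labels, and applying the 17% right-to-work boost as a multiplier on the whole labor force value is an assumption about how that step works; it does not affect the California case.

```python
def labor_force_value(pct_college, right_to_work=False):
    """Annual dollar value of a worker under the post's formula."""
    value = 22845 + 775 * pct_college
    if right_to_work:
        # Assumption: the 17% boost multiplies the whole value.
        value *= 1.17
    return value


def projected_job_growth(mean_wage, pct_college, pct_rural,
                         regional_correction=0, right_to_work=False):
    """Projected jobs gained per 1000 residents over the cycle."""
    profit = labor_force_value(pct_college, right_to_work) - mean_wage
    # Rural losses are subtracted, the regional correction is added.
    return profit * 6 / 1000 - pct_rural + regional_correction


# California inputs from the example above.
california = projected_job_growth(
    mean_wage=50730, pct_college=30, pct_rural=6, regional_correction=30
)
print(round(california, 1))
```

The result comes out negative because California's mean wage is higher than its labor force value, so the profit term is below zero before the rural and regional terms are applied.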

California versus Texas: Part 5: Rural job losses and regional corrections

The Rural Population Effect

Studying the data I noticed that the states with high job growth all seemed to be highly urbanized. On the other hand there were states that seemed to be doing everything right, where the job growth was mediocre. The thing that stood out about those states is that they had large rural populations.

This chart shows job growth versus the percentage of the population which is urbanized. States with low urbanization never seem to do well. Highly urbanized states can do well, or they can do badly.

Over the past decade, rural areas seem to lose jobs at the rate of 1 job per 1000 residents per percentage point of rural population. So I would expect a state with a 40 percent rural population to lose 40 jobs per thousand state residents over the cycle from this rural population effect.

These rural job losses are consistent with what is known about strong productivity gains in industries like farming. I think that this is the tail end of a process that has been going on for over a hundred years. In 1880 agriculture accounted for over 41% of US employment. People had to live on the land they were farming, so a scattered rural population made sense. Today, only 1% of the US population works in farming, and a scattered rural population no longer makes economic sense unless commodity prices rise. For the past century, towns and cities have been the engines of the economy.

It is important to note that the Census Bureau's definition of urbanization includes some quite small communities. Anybody living in a town of more than 2500 people is an urban resident.

California is one of the most urbanized states, with 94% of the population living in urban areas. Texas is at a slight disadvantage, with 83% of the population urban.
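As a quick illustration of the rule of thumb above, the rural effect can be sketched in a couple of lines of Python. The function name is my own, and the one-job-per-point rate is simply the figure quoted in this post.

```python
def rural_job_loss(pct_urban, jobs_lost_per_rural_point=1.0):
    """Jobs lost per 1000 residents over the cycle from the rural effect."""
    pct_rural = 100 - pct_urban
    return pct_rural * jobs_lost_per_rural_point


for state, pct_urban in [("California", 94), ("Texas", 83)]:
    print(state, rural_job_loss(pct_urban))
```

On these figures the gap between the two states is 11 jobs per thousand residents over the cycle.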

Regional Corrections

When I was a kid, I had a small tear off calendar with a different humorous quote for each day. One that I have never forgotten was the definition of Flannagan's Finagling Factor. This is:

'That quantity which, when multiplied by, divided by, added to, or subtracted from the answer you got, gives you the answer you should have gotten.'

I'm going to have to introduce a few of these to make the numbers work out, but I promise to only use them across broad geographic regions, and there are only 3. What I think they represent is job creating factors that my model doesn't include.

The first one is for Alaska. My model predicts Alaska should be losing lots of jobs, when in fact it is gaining a lot. I think this is because Alaska is a state with few people and lots of oil. This one is +116 jobs / 1000 population.

The second one is for the Rocky Mountain west. This one is +18 jobs / 1000 population. This area includes Montana, Idaho, Nevada, Utah, and Colorado. This might have something to do with mining and high commodity prices.

The third one covers three regions. These are the West Coast, the states along the Mexican border, and the East Coast from Virginia north to New England. This one is +30 jobs / 1000 population. This might have something to do with the growth of international trade. The states involved are Washington, Oregon, California, Arizona, New Mexico, Texas, Virginia, Maryland, Delaware, Pennsylvania, New Jersey, New York, Connecticut, Rhode Island, Massachusetts, Vermont, New Hampshire and Maine.

These regional corrections are the final element. In my next post I will show the predicted job growth and compare it with the actual job growth.
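The three corrections listed above can be collected into a simple lookup table. This is only a sketch: the variable and function names are mine, and treating every state not listed as having a correction of zero is my reading of "if any".

```python
ROCKY_MOUNTAIN = ["Montana", "Idaho", "Nevada", "Utah", "Colorado"]

COASTS_AND_BORDER = [
    "Washington", "Oregon", "California", "Arizona", "New Mexico", "Texas",
    "Virginia", "Maryland", "Delaware", "Pennsylvania", "New Jersey",
    "New York", "Connecticut", "Rhode Island", "Massachusetts", "Vermont",
    "New Hampshire", "Maine",
]

# Corrections are in jobs per 1000 population.
REGIONAL_CORRECTIONS = {"Alaska": 116}
REGIONAL_CORRECTIONS.update({state: 18 for state in ROCKY_MOUNTAIN})
REGIONAL_CORRECTIONS.update({state: 30 for state in COASTS_AND_BORDER})


def regional_correction(state):
    """Return the correction for a state, or 0 if it has none."""
    return REGIONAL_CORRECTIONS.get(state, 0)


print(regional_correction("Texas"), regional_correction("Kansas"))
```

Written out like this, it is easy to see that 24 of the 50 states receive some correction.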

(Data on urbanization is from Table 27 of the Statistical Abstract of the US from the Census Bureau. I'm using 2006 numbers.)

Friday, July 29, 2011

California versus Texas: Part 4: Wages and Housing cost

I think there are two factors that drive wage levels. On the demand side, the employer side, there is the education level of the workforce. On the supply side, the worker side, I think housing costs are an important driver of wages. People can always pack up and move to another state if they don't like their standard of living after taxes and housing costs have been paid.

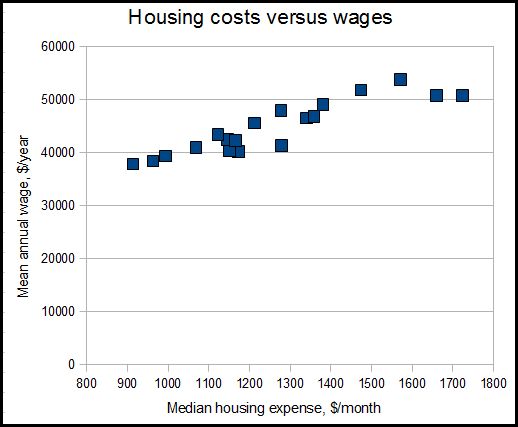

This chart demonstrates the correlation between housing costs and wages for 20 states.

New Jersey and California have the highest costs, while Iowa has the lowest. Affordable housing keeps workers happy even if wages aren't the best. I think that California, New Jersey and New York could be doing much better if their housing became more affordable.

The next chart shows housing costs versus job growth over the last economic cycle. Very expensive and very cheap housing seems to be associated with low job growth.

Which state is best?

In order to see which state provides the best standard of living I looked at two median wage earners in one median house. I assume they file taxes separately and have no children. I used a web based paycheck calculator to find their take home pay after taxes, then I deducted the monthly housing expense. I ignore the mortgage interest tax deduction.

California and Texas come out in the middle of the pack with a similar standard of living to each other. It is important to remember that there are huge differences in housing costs between different areas of California, so this might not apply to any specific Californian city.

Washington state does extremely well. My jobs model doesn't work at all for Washington state. It is an anomaly in many ways, and I don't know why.

Iowa has the lowest wages of any of the states listed, but makes up for it with very cheap housing. Utah workers work cheap, and Utah has one of the best job growth rates of any state.

The bottom line here is that affordable housing keeps wages down and living standards high.

(Important Data Note: For housing cost I'm using the median selected monthly owner cost from 2003 (Table 957) from the 2006 Statistical Abstract published by the Census Bureau. Based on a review of the Case-Shiller indices, current housing prices are close to 2003 levels in most areas. However, Nevada, Minnesota and Michigan are significantly cheaper today than in 2003. Except for those states, the cost I use should be within $2500 of the cost in 2011. These owner costs include mortgage, property taxes and utilities.

The web based paycheck calculator I used is PaycheckCity)

Thursday, July 28, 2011

California versus Texas: Part 3: Education

The education level of a state's workforce is strongly correlated with salaries. While I think that good high school education is important, it is the percentage of college graduates that seems to determine the value of the workforce.

Massachusetts, Connecticut and New York are the best educated states, and they have the highest wages. 38% of Massachusetts residents have a degree, and they have an average wage of $53,700.

California has the sixth highest wages at $50730 and 30% of the workforce has a degree. Eight other states have both lower wages and a better educated workforce than California.

California does especially badly when looking at the percentage of the workforce who graduated high school. Only Texas and Mississippi do worse than California. Texas earns $42220 and 25% of the workforce has a degree. Mississippi has the lowest wages at $33930 and only 19% of the workforce has a degree.

I'm very worried for California. We have an expensive workforce with a doubtful quality of education. Guess which area has been heavily affected by budget cuts in the past few years? Schools and universities!!! Workers are unlikely to reduce their wages, so employers may leave the state if the educational level of the workforce falls further.

Texas is less well educated than California, but they have much lower wages to compensate for that.

How much does education boost the value of a workforce? In my model I use:

Labor force value ($/year) = 22845 + (775 * (Percentage of workforce with a college degree))

In my model, boosting the share of people with a degree by 5 percentage points is worth about $4000 extra in mean annual wage, all else being equal. I haven't checked to see if this agrees with values published by economists.
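To make the formula concrete, here is a small Python sketch that applies it to the four states mentioned in this post and sets the result next to the mean wage quoted for each. The names are my own, and the right-to-work adjustment is deliberately left out here.

```python
def labor_force_value(pct_college):
    """Labor force value in dollars per year, before any other adjustment."""
    return 22845 + 775 * pct_college


# (percentage with a degree, mean wage) as quoted in this post.
states = {
    "Massachusetts": (38, 53700),
    "California": (30, 50730),
    "Texas": (25, 42220),
    "Mississippi": (19, 33930),
}

for name, (pct_college, mean_wage) in states.items():
    value = labor_force_value(pct_college)
    print(f"{name}: value {value}, wage {mean_wage}, gap {value - mean_wage}")

# Value of five more percentage points of graduates.
gain = labor_force_value(35) - labor_force_value(30)
print(gain)
```

The gain from five extra points is 775 * 5 = 3875 dollars, which is where the figure of about $4000 comes from.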

(Educational attainment is 2008 data from the Census Bureau, Wage data is for May 2010 from the Bureau of Labor Statistics)

California versus Texas: Part 2: The power of right-to-work legislation

For those unfamiliar with them, right-to-work laws greatly reduce the power of unions. Below I have the same chart as in the first post. I indicate the right-to-work states by coloring their bars red.

There is a very strong correlation between right-to-work laws and job creation. There are a couple of southern states that have lost jobs despite being right-to-work, but those states have major handicaps associated with poorly educated and rural populations.

I think that companies really do not want to have to deal with strong unions, and that right-to-work laws enhance the value of the workforce to the employer. My job creation model uses a 17% enhancement in value, which is equivalent to workers being willing to work for $6000 less than they actually receive in pay.
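A rough Python sketch of what a 17% enhancement means in dollars is below. It assumes the enhancement multiplies the labor force value formula used elsewhere in this series (22845 + 775 * percentage college educated); that reading, and the function names, are my assumptions rather than something spelled out in this post.

```python
def labor_force_value(pct_college):
    """Base labor force value in dollars per year."""
    return 22845 + 775 * pct_college


def right_to_work_premium(pct_college, boost=0.17):
    """Extra dollars per worker per year from the right-to-work boost."""
    return boost * labor_force_value(pct_college)


for pct_college in (15, 20, 25, 30):
    print(pct_college, round(right_to_work_premium(pct_college)))
```

Under this reading the premium is not a fixed amount: it grows with the education level of the workforce, so the $6000 figure is best read as a ballpark for a typical right-to-work state.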

This is not due to people having to work for less. Given two workforces of equal educational levels and equal pay, the workforce in the right-to-work state is worth a lot more to an employer.

Correlation or Causation?

It is possible I suppose that right-to-work laws are correlated with something else which is responsible for the job growth. However, there is plenty of qualitative evidence that companies favor right-to-work states for expansion. Hyundai built their factories in Alabama. Toyota went for San Antonio for their new pick-up truck plant. BMW went to South Carolina and Mercedes went to Alabama.

Seattle based aircraft maker Boeing is going to great lengths to get away from their unionized, Washington state workforce. Despite having enviable pay and perks, this workforce goes on strike every few years for more money. Management and the union hate each other.

On their new 787 airliner, Boeing outsourced most of the production to factories in Italy, Japan and South Carolina. South Carolina is a right-to-work state. Despite trouble recruiting qualified workers in South Carolina, Boeing is going ahead with a major expansion of that plant. They are putting a final assembly line there, which will allow them to deliver 787s without any of the parts passing through Seattle.

Does right-to-work create jobs or just move them around?

It is certainly possible that right-to-work states are just stealing jobs from union friendly states. However, as the 787 project shows, jobs that leave union-friendly states can always go overseas. I think that both job creation and job shifting are factors in the success of right-to-work states.

(Blog note: I'm on Twitter as Schrodinger333 and will try to remember to send out a Tweet whenever I put up a new blog post)

There is a very strong correlation between right-to-work laws and job creation. There are a couple of southern states that have lost jobs despite being right-to-work, but those states have major handicaps associated with poorly educated and rural populations.

I think that companies really do not want to have to deal with strong unions, and that right-to -work laws enhance the value of the workforce to the employer. My job creation model uses a 17% enhancement in value, which is equivalent to workers being willing to work for $6000 less than they actually receive in pay.

This is not due to people having to work for less. Given two workforces of equal educational levels and equal pay, the workforce in the right-to-work state is worth a lot more to an employer.

Correlation or Causation?

It is possible I suppose that right-to-work laws are correlated with something else which is responsible for the job growth. However, there is plenty of qualitative evidence that companies favor right-to-work states for expansion. Hyundai built their factories in Alabama. Toyota went for San Antonio for their new pick-up truck plant. BMW went to South Carolina and Mercedes went to Alabama.

Seattle-based aircraft maker Boeing is going to great lengths to get away from their unionized Washington state workforce. Despite having enviable pay and perks, this workforce goes on strike every few years for more money. Management and the union hate each other.

On their new 787 airliner, Boeing outsourced most of the production to factories in Italy, Japan and South Carolina. South Carolina is a right-to-work state. Despite trouble recruiting qualified workers in South Carolina, Boeing is going ahead with a major expansion of that plant. They are putting a final assembly line there, which will allow them to deliver 787s without any of the parts passing through Seattle.

(Photo caption: Boeing hated their union so much, they built this plane to fly wings and fuselages in from elsewhere!!!)

Does right-to-work create jobs or just move them around?

It is certainly possible that right-to-work states are just stealing jobs from union-friendly states. However, as the 787 project shows, jobs that leave union-friendly states can always go overseas. I think that both job creation and job shifting are factors in the success of right-to-work states.

(Blog note: I'm on Twitter as Schrodinger333 and will try to remember to send out a Tweet whenever I put up a new blog post)

Tuesday, July 26, 2011

California versus Texas: Why some states grow jobs and others don't

There's been a lot of talk recently about the success of Texas in creating jobs while the rest of the country stagnates. The Federal Reserve Bank of Dallas recently claimed that 37% of all net new jobs created in the last two years in the US were in Texas. Some credit this to the leadership of Texas governor, and potential Presidential candidate, Rick Perry.

Meanwhile, California, once America's most dynamic state, has become an example of stagnation. Conservatives point to us as an example of the damage that can be done by environmentalism and high taxes.

Is Texas really as good as they say?

All the discussion about Texas so far has missed a rather important point. Texas creates a lot of jobs because it is big! To properly compare states, I have calculated the job growth per thousand residents over the past economic cycle for each of the 50 states. I'm interested in sustainable and long term job growth, so I am using an entire economic cycle, comparing the employment low of the 2001 recession with the employment low of the recession that began in 2008.

It turns out that Texas is not the best job creator in the country. That honor goes to Arizona, which generated 69 jobs per 1000 people who lived there in 2002. Janet Napolitano, take a bow! Amid the wreckage left behind by the housing collapse, it is easy to overlook the vast number of jobs created in Arizona over the past cycle. Even after losing many jobs in the recession, Arizona created more jobs per resident over the period than any other state. Then comes Utah, where Jon Huntsman was governor. Then come Washington and Virginia. Texas is next, at 46 jobs per 1000. So Texas is a good job creator, but other places have done better.

There is another side to this story. Rather a lot of states actually lost jobs over the past decade. California, Illinois, Massachusetts and New Jersey are some of the largest and wealthiest states in the country, but all are in slow economic decline. California lost 5 jobs per 1000 residents over the past cycle, even as the population grew.

The worst story is in the Midwest. Missouri, Ohio and Michigan experienced an economic meltdown. Michigan was the worst, losing 50 jobs per 1000 residents. That is about as many jobs lost as Texas gained!

Why is this happening?

In a recent Room for Debate at the New York Times, Pia Orrenius from the Federal Reserve Bank of Dallas credited Texas's oil deposits, ports and low cost of living. Columnist Paul Krugman proposed three possible models on his blog. They involve either a reduction in wages, cheap housing or a surge in productivity due to the policies of Rick Perry.

I think it is really important right now to understand why some places create jobs and others don't. America's 50 states offer a kind of natural economic laboratory for understanding which job creation strategies are likely to work. I've been digging into the data, and have arrived at a model which, up to a point, can explain the differences in job creation by states over the last economic cycle. I think that most of the differences in job growth between states over the past economic cycle can be determined from five variables. Getting to that formula will take me quite a few blog posts, so be patient!

(Technical notes: Job data from the Department of Labor. Population data from the Census Bureau. Calculations use state populations as of 7/1/2002. I am measuring jobs from trough to trough. In many places that is 2002 to late 2009. In others it is 2003 to 2010.)
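For anyone who wants to reproduce the per-capita measure, here is a minimal Python sketch of the calculation described in the technical notes: trough-to-trough change in jobs, divided by the state's population at the start of the cycle, times 1000. The function name and the sample figures are hypothetical and are not real state data.

```python
def jobs_per_thousand(jobs_at_first_trough, jobs_at_second_trough, population):
    """Net jobs gained (negative means lost) per 1000 residents over one cycle."""
    return 1000 * (jobs_at_second_trough - jobs_at_first_trough) / population


# Made-up example: a state of 5 million people that goes from
# 2,000,000 jobs at the first trough to 2,230,000 at the second.
print(jobs_per_thousand(2_000_000, 2_230_000, 5_000_000))
```

Dividing by the starting population is what lets a big state and a small state be compared on the same scale.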

Tuesday, March 22, 2011

Fukushima, Japan nuclear news update

Conditions appear to have stabilized on site. Grid power is now available in all the units. However, electrical equipment still must be checked before it can be switched on. Obviously, getting cooling systems back into operation is a priority. The lights are on in the control room for Reactor 3, and bringing this back into service will enable better monitoring of the situation. Operations to add water to the spent fuel pools of Reactors 3 and 4 continue. A concrete pumper with an extending boom has been brought in to provide water at unit 4. However, aerial surveillance suggests that water doesn't stay in the pools, and that they are leaking.

Some radiation has been detected leaking into the ocean.

The bottom line is that the situation is stable but still not safe.

When the Reactor buildings at unit 2 and unit 4 suffered explosions on March 15th, the blasts occurred within 6 minutes of each other. I think that this is odd. Perhaps it indicates that the explosions were somehow linked.

pdf status report from JAIF

Sunday, March 20, 2011

Why the Fukushima crisis has people worried

The images below have been adjusted to the same scale. One is an image of Northern Japan, and the other shows the size of the area affected by radiation from Chernobyl. The arrow indicates the location of the nuclear plant.

This only relates to long term health impacts. At Chernobyl, only workers at the site were affected by radiation sickness. The images show how bad a Chernobyl style release could be for the Japanese.

(Images from wikimaps and wikipedia)

The precise patterns of fallout would depend on wind directions and rainfall. Tokyo is the world's largest metropolitan area, with 35 million people.

Fortunately, the latest news from Japan is good. Major progress has been made in adding water to spent fuel ponds and restoring cooling. However, there is much still to be done.

Friday, March 18, 2011

More gadgets for Fukushima

Might disaster be avoided?

AERIAL WATER TOWER / HYDRAULIC PLATFORM

I can't find data on the height of the spent fuel pond, but it appears to be less than 50 meters above the ground. Google Earth indicates that the original reactor buildings were 80 meters high. There are a few fire-fighting vehicles which can reach that high. Once positioned and aimed, the crew could abandon the vehicle, leaving it to deliver water to the spent fuel pond. If it could be connected to the Hytrans water supply system which I mentioned in my last post, then a continuous stream of water could be delivered to the spent fuel pond indefinitely. Set up time for these systems is of the order of minutes rather than hours.

The HLA from Finnish firm Bronto Skylift can deliver 3800 litres per minute (228 tonnes per hour) of water to over 100 meters above ground level. It also has substantial horizontal reach, allowing it to poke into the reactor building to reach the fuel pond. The pictures show it in operation.

I think this might just work, but the setup time will expose the crew to a substantial radiation dose.

http://www.bronto.fi/sivu.aspx?taso=1&id=125
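The flow figure above is easy to check. Here is a small Python sketch of the unit conversion, assuming fresh water at about 1 kg per litre (seawater is slightly denser). The function name is made up for illustration.

```python
def litres_per_minute_to_tonnes_per_hour(litres_per_minute, kg_per_litre=1.0):
    """Convert a water flow rate from litres per minute to metric tonnes per hour."""
    kg_per_hour = litres_per_minute * 60 * kg_per_litre
    return kg_per_hour / 1000  # 1000 kg in a tonne


# 3800 litres per minute works out to 228 tonnes per hour.
print(litres_per_minute_to_tonnes_per_hour(3800))
```

The same conversion gives 180 tonnes per hour for a system rated at 3000 litres per minute.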

FOX NBC RECONNAISSANCE VEHICLE

The M93 Fox is a vehicle used by the military to map areas affected by radioactive fallout and chemical contamination. If the worst happens in Japan, these vehicles and their crews will be in demand to map contaminated areas. The US military has a number of these vehicles.

http://en.wikipedia.org/wiki/M93_Fox

http://www.globalsecurity.org/military/systems/ground/m93a1.htm

BRADLEY FIGHTING VEHICLE

This is normally used to carry soldiers on the battlefield. They are equipped with ventilation systems which can filter out radioactive particles and protect the troops inside. Thick steel armor also shields the occupants from radiation. Bradleys could be used to transport people through contaminated areas.

http://en.wikipedia.org/wiki/Bradley_fighting_vehicle

GLOBAL HAWK UAV

This large, high flying unmanned airplane can keep the reactors under surveillance and warn of any changes. It has infra-red cameras which would be ideal for detecting fires and hot spots.

http://en.wikipedia.org/wiki/Northrop_Grumman_RQ-4_Global_Hawk

UPDATE: Saturday: The Japanese appear to be thinking along similar lines. The Yomiuri Shimbun newspaper reports that they are sending some fire trucks and a hose laying system from Tokyo. Quote:

One large ladder truck is capable of spraying water from about 40 meters above the ground, while an elevating squirt truck can blast 3.8 tons of water per minute from a height of 22 meters by remote control, the fire department said. These high positions will allow water to be sprayed onto the storage pool, which is in the upper part of the reactor building. Other vehicles include a hose layer to extend hose lines. A hose layer carries 72 hoses, which can be connected to "Super Pumper" trucks to build a long-distance pumping system. The system is designed so water can be pumped from the sea or a river as much as two kilometers away to maintain a constant supply to the engines, according to the fire department.

The Fire Engine Photos website has a picture of one of the "Super Pumper" hose laying systems here. It belongs to the elite "Hyper Rescue" squad.

Thursday, March 17, 2011

Send in the robots!

I have noticed some people commenting elsewhere about sending in various types of robot to save the plant. So I looked around the web and found quite a few things that would be potentially useful.

UNMANNED K-MAX HELICOPTER

This is capable of lifting about 2 tons, and it would be ideal for water drops onto the reactor. In fact it would have a lot of potential uses in this situation, and hopefully Japan is being made aware of its capability. This was developed for airborne resupply of isolated soldiers. It dropped 16 payloads in recent tests. It does have a cockpit so it can be flown manned if necessary.

http://www.gizmag.com/k-max-unmanned-helicopter-milestones/17969/

RADIO CONTROLLED HELICOPTERS WITH CAMERAS

There are a huge variety of small radio controlled helicopters which could be flown into the reactor buildings to get a closer look at the damage. A big limitation is that they have to be within line of sight of the radio controller, and maximum range is about 300 meters. Endurance is about 20 minutes before the battery runs out.

Tiny wireless cameras are available for these things, with a mass of only 9 grams.

Probably the coolest of all these is the Draganflyer X6 flying camera. It looks like something out of James Bond. Be sure to check out the YouTube video below.

http://inventorspot.com/articles/draganflyer_x6_camera_007_would_love_27309

US NAVY FIRESCOUT

This is a really nice robotic flying camera. Endurance is 8 hours.

http://en.wikipedia.org/wiki/Northrop_Grumman_MQ-8_Fire_Scout

ROBOT BULLDOZER

For clearing debris around the reactors, what about this 60 tonne robot bulldozer from Israel? The Israelis use it to clear roads of mines.

http://www.theregister.co.uk/2009/03/31/idf_robot_d9_revelations/

HYTRANS FIREWATER SUPPLY SYSTEM

This system can deliver 3000 litres per minute of water over several kilometers, using flexible hoses like the one in the picture. The Berkeley, CA fire department recently bought one for fighting fires after an earthquake. It can be rapidly deployed from trucks. Something like this could potentially provide a way to get water to the reactors and spent fuel ponds.

http://www.hytransfiresystem.com/

ROBOT FIRE ENGINE

The Japanese have developed a small robot fire engine called the Rainbow 5. If this could be lowered onto the roof of Reactor 3, maybe it could insert a hose into the spent fuel pond.

http://www.youtube.com/watch?v=KOSh8dNGzYo

BUT WILL THE ELECTRONICS SURVIVE?

One potential snag is that most of these gadgets depend on silicon microchips. These microchips are somewhat vulnerable to strong radiation, which can cause computer errors.

Wednesday, March 16, 2011

Fukushima: Japanese for Chernobyl

What's the big picture here? Deep within the radioactive ruins of the Fukushima Daiichi nuclear complex, there lie four swimming-pool-like structures. These are the spent fuel ponds. These are full of used fuel rods from the nuclear reactors. Spent fuel must be kept covered with water to absorb the radiation from it and keep it cool.

Heat from the spent fuel is slowly evaporating the water in the ponds. Under normal circumstances topping up the water level would not be a problem, but the situation at Fukushima is not normal. Over the past few days, the reactor buildings have been ripped apart by hydrogen explosions. The ponds are located on the upper levels in the buildings, and normal access routes to them are probably destroyed or blocked by debris. The blasts may have cracked the concrete structure of the ponds themselves, causing water leaks. Piping and instrumentation cables leading to the ponds have probably been destroyed.

What makes this much worse is that the hydrogen explosions have also punctured at least two of the reactor containments. The whole Fukushima site is now covered in dangerous radiation, and levels near the reactors are reported to be lethal.

The bottom line here is that there is little chance that the plant workers will be able to maintain the water levels in the fuel ponds. That fuel is going to be exposed to the air.

What happens then? The fuel will heat up, and the metal cladding will react with the water to liberate hydrogen. This will lead to further hydrogen explosions. At some point the water will all boil away. Fuel temperatures will then rise much further.

Expert opinion differs on what happens next. Some experts say that the fuel will not catch fire. Others say that the fuel will burn, and I strongly agree with that. Put a large pile of flammable stuff in a metal-lined basin which tends to retain heat, then raise the temperature, and something is going to ignite.

Experts I have read seem to agree that a spent fuel pond fire is one of the worst things that can happen in a nuclear plant. It is as bad as a core meltdown, if not worse. It will release huge amounts of radiation into the air, which will then go wherever the winds take it.

Major spent fuel pond fires are now all but inevitable. This will be every bit as bad as Chernobyl. That is why the French are telling their citizens to leave the country, and why the US is advising Americans to stay 50 miles away.

ARE THE PONDS ALREADY BURNING?

Some US experts believe that the water in the spent fuel pond at Reactor 4 has already gone. The reactor building has been blown apart by a hydrogen explosion, and they think that the exposed fuel rods are the source of the hydrogen. There have been signs of a fire inside the building.

I think the US experts are wrong. The spent fuel pond is at the top of the structure. Hydrogen tends to rise, and it would not sink down into the lower levels of the building. Hydrogen from the fuel ponds would blow the roof off, but would not produce the kind of damage visible in building 4. The hydrogen that blew up building 4 came from somewhere else. However, it is possible that the explosion damaged the pond and that all the water leaked out.

The Japanese say that the water is still there and that the temperature is 86 Celsius, far above normal. I suspect that the Japanese are correct, but it doesn't really make much difference. There is no feasible way to top the water up.

Tuesday, March 15, 2011

Radioactive steam leaking at Fukushima

Various reports on the web indicate high radiation levels near Reactor 3. The media is reporting that radioactive steam is venting from that reactor and that containment has been lost.

This satellite picture shows Reactor 3 just after it exploded. There is steam venting clearly visible. This venting is almost certainly not a design feature. It is coming from an area which has been totally destroyed by the blast. I suspect it shows a breach in containment which was caused by the explosion.

There have been many reports of intentional venting of the containment. When the plant does that, the gas is passed through filters in order to remove as much radiation as possible. Then it is emitted from a tall smokestack.

A LITTLE GOOD NEWS

Latest reports are that the fire in Reactor 4 had nothing to do with spent fuel. It is now said that an oil leak was to blame.

UPDATE: There are conflicting reports on the fire.

UPDATE: Latest photographs make it clear that Reactor 4 has suffered a massive explosion. The damage is almost as bad as Reactor 3. I wonder if hydrogen from #3 somehow got into #4, perhaps via the ventilation system?

AN EVEN BIGGER PROBLEM?

Somewhere in the radioactive ruins of Reactor 3 there is a swimming-pool-like tank called a spent fuel pond. It has been reported that there is some fuel in the pond. Such ponds often contain many reactors' worth of radioactive material.

Heat from the spent fuel evaporates the water, and under normal circumstances several percent a day of the volume would be lost. If the explosion has damaged the tank and it is leaking then levels could fall more quickly. If all the water were to be lost, then the fuel rods would overheat and probably catch fire. That would lead to a Chernobyl style release of radiation.

It is essential that the water in this pool is kept topped up, but how will this be done? The pool is high up in the reactor structure, in an area subject to intense radiation. Normal access routes to the pool may be impassable. Pipes feeding the pool may have been destroyed in the explosion.

Here is the question. Do the Japanese know what the water level is, or were the instrument cables destroyed in the explosion? Do they have the ability to top up the water level?

If not, then Japan has a very big problem.

Monday, March 14, 2011

Nuclear disaster goes from bad to worse

The Fukushima disaster is now spinning out of control.

REACTOR 2 BREACHES CONTAINMENT

The explosion yesterday from Reactor 3 destroyed most of the pumps that were cooling Reactor 2. Reactor 2 started down the now familiar path of falling water levels, exposed fuel rods, fuel melting, and hydrogen generation.

However, this time the result has been a breach of the containment. At the base of the reactor there is a big circular steel tube called the "Wet Well". This has the shape of a donut or torus, and it is normally partly filled with water. It is connected to the "Dry Well", which contains the reactor, by big steel tubes.

The Japanese now believe that the Wet Well is leaking, maybe as a result of too much internal pressure, or maybe as the result of a hydrogen explosion.

The Wet Well and the Dry Well together make up the containment. Both must stay intact to contain radiation if a meltdown occurs.

SPENT FUEL PONDS

When I was annotating this diagram for the last post, I was wondering about something that looked like a spent fuel storage pond sitting at the top of the reactor. I was hoping that it was just temporary storage, which would be empty.

Today other people have started commenting on it, so I have marked it in tonight's post. It now seems as if there may be a lot of spent fuel stored in that pond.

It is in an extremely vulnerable position, right at the top of the reactor. Fortunately, the hydrogen explosions have so far blown away surrounding structure while leaving the spent fuel storage ponds intact. If the fuel were to be ejected from the pond it would subject the whole site to intense radiation which would be immediately detectable. If exposed to air, the fuel would probably also catch fire. This would release large amounts of radiation.

The fuel generates heat, and it will tend to boil water out of the pool. The pool needs to be kept topped up.

WHAT IS SPENT FUEL?

When fuel is removed from a reactor it is extremely radioactive and still producing significant amounts of heat. A large crane lifts the fuel out of the reactor vessel and lowers it into a deep tank of water. This is the spent fuel pool. The water blocks the radiation, and keeps the fuel cool.

In time, the radiation and heat production drops. Eventually, the fuel is put into a large metal container, lifted out of the pool by a crane, and lowered onto a railway car for transport.
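
For a feel of how quickly the heat production drops, here is a minimal sketch using a classic rule-of-thumb (the Way-Wigner approximation). The coefficient varies a little between textbooks, roughly 0.062 to 0.066, so treat the value below as an assumption. The function name is mine, and the result is only a rough estimate.

```python
def decay_heat_fraction(t_after_shutdown_s, t_operating_s, coeff=0.066):
    """Approximate decay heat as a fraction of full operating power.

    Way-Wigner style rule of thumb; times are in seconds.
    coeff is an assumed value; published versions differ slightly.
    """
    if t_after_shutdown_s <= 0 or t_operating_s <= 0:
        raise ValueError("times must be positive")
    return coeff * (
        t_after_shutdown_s ** -0.2
        - (t_after_shutdown_s + t_operating_s) ** -0.2
    )


if __name__ == "__main__":
    HOUR = 3600.0
    DAY = 24 * HOUR
    YEAR = 365 * DAY
    # Fuel that ran for one year, at various times after shutdown.
    for label, t in [("1 hour", HOUR), ("1 day", DAY), ("30 days", 30 * DAY), ("1 year", YEAR)]:
        print(label, decay_heat_fraction(t, YEAR))
```

The curve falls steeply at first and then flattens out, which is why fuel has to sit in the pool for a long time before it can be moved.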

REPORTS OF FIRE IN REACTOR FOUR

This is odd, because Reactor Four was supposed to be shut down for maintenance. It is possible that some supplies left behind by the maintenance workers caught fire. Things like electrical cables can burn, and there was a serious fire at Browns Ferry many years ago which was started by a maintenance worker.

A far more disturbing possibility is that the spent fuel is burning. That might happen if all the water in the spent fuel pool boiled away. If fuel is burning, large amounts of radiation will be detected.

Another Japanese reactor building explodes

This was rather more spectacular than the first. This building contained a larger reactor, which likely produced more hydrogen gas. Parts of the building were flung high into the sky. Fortunately, radiation levels remain stable.

Video from Sky News

These reactor buildings are of the General Electric Mark 1 design. This dates from the late 1960s and it has faced a lot of criticism from anti-nuclear groups. It hasn't been legal for new construction since the 1970s.

A lot went wrong at Three Mile Island, but one of the success stories is that the containment building worked. That building also faced a hydrogen explosion, but the explosion was contained within the structure and the building survived intact.

Here is a diagram of the Mark 1 containment, similar to the ones at Fukushima. You can recognize the square shape of the building. It can accommodate several different sizes of reactor. The containment vessel is within the building. It has the shape of an upside down light bulb. It seems that the containment vessel is still intact, although some of the concrete structures around it have been blown away by the hydrogen explosion.

One of the questions that needs to be asked is whether a more modern design would have performed better. The latest containments, if I remember correctly, can survive about 200 atmospheres of pressure. The Japanese kept the pressure in this one under 8 atmospheres.

The image below is one of those pictures which really tells a story. On the left is a before picture of the plant. Note all that equipment close to the ocean! On the right is a picture taken after the tsunami. Note that a lot of stuff isn't there anymore! This plant was massively damaged by the tsunami, and it is no surprise that it is in big trouble. There is a much better interactive version of this picture here. Scroll down their page to find it.

Obviously this was a very extreme event. One of the lessons that needs to be learned here is that the prediction of geological hazards is an imprecise business, and that a safety factor should be applied above and beyond the earth scientists' worst-case projections.

Saturday, March 12, 2011

Bad News

Reports from Japan indicate an explosion and smoke coming out of their nuclear plant.

OK, smoke coming out of a troubled nuclear plant does not sound like good news. It might be steam, which isn't good news either. If hot molten metal drops into a pool of water, the result can be a steam explosion. A melted down core would be hot molten metal.

This may very well mean that the containment has been breached.

OK, I just found some video and it looks like a BIG explosion. NHK is reporting that the walls and roof of a building at the site have collapsed. This looks like a Chernobyl-style release. The only good news here is that the cloud seems to be heading out to sea.

1.17am Well at least California is 5000 miles east of Japan. I was in Europe in 1986 when Chernobyl blew, and it wasn't all that big a deal in Britain. Some milk and cheese had to be thrown out, but we didn't all get irradiated. Hopefully this cloud will disperse out over the Pacific without bothering anybody. I'm sure the Pentagon has a program to predict nuclear fallout, and they might want to get that fired up and try to predict where this cloud is headed.

1.50am I remember seeing this a couple of hours ago: 'Japan's nuclear agency says radioactive cesium is detected in the air near one plant'

When uranium fissions in a nuclear reactor, cesium is one of the products.

http://en.wikipedia.org/wiki/Caesium-137

If this stuff is coming out it probably means severe heat damage to the fuel, which could mean that it has melted.

( Edit, Sunday, March 13 : Fortunately no major radiation release resulted from this event, but it looked really bad at the time. 'Explosion' and 'Nuclear Reactor' are words you don't want to see in the same sentence!)
